Single Source Shortest Path Algorithm and to Improve its Performance to use in Routing Protocols

2.1 Abstract:

The single-source shortest path algorithms (such as Dijkstra’s and Bellman-Ford algorithms) are designed for computing the shortest-paths from a single source. They are used as the core building blocks for designing routing protocols in communication networks. The standard textbook formulation of these algorithms stops at computing the shortest-paths and the Minimum Spanning Tree (MST) of the network graph rooted at the source. In real life, however, the network is not stationary; the costs of the links change due to either the improvement/deterioration of links or change in the traffic on them. Hence, the algorithms need to be adaptable to changing dynamics. In this section the single source shortest path algorithms namely Bellman Ford and Dijikstra algorithm are studied. The purpose of the study is to choose one algorithm among the two and mentioning why the same has been chosen for extension to suit the network dynamics. Also this section will provide a data structure to be used and modify the SSSP algorithm so that the same can be used in dynamic changing networks without decreasing its performance where every tasks needs to take place in milliseconds or even in nanoseconds. To solve this problem I had considered a network as a weighted graph representing a network of nodes connected by links where the edge weights can change over time. Without loss of much generality I had assumed that not more than one edge weight changes at a time. This experiment report is organised into two sections 2.2 and 2.3 .Further section 2.3 is again subdivided into various subsections from 2.3.1 to 2.3.4. Here,

- I studied two algorithms to calculate the shortest path namely the Bellman Ford and the Dijkstra’s algorithm and given my argument why I had chosen the Dijkstra’s algorithm and why it is better than the Bellman Ford in section 2.2 to handle the problem mentioned here.

- I studied the two ways of storing the graphs to solve the SSSP problem. One is by using adjacency matrix in section 2.3.1 and other is using adjacency list in section 2.3.2 and explains why I had chosen Adjacency List representation over Adjacency Matrix

- I studied the performance of Dijkstra’s algorithm by using Fibonacci heaps (implemented using OOP concept of C++ on windows) using delete min and decrease key function in section 2.3.3 and explains why I have chosen the Fibonacci Heap.

- I also studied on how to minimize the complete Dijkstra’s algorithm run over the entire nodes in the network graph, whenever the edge weight changes i.e. if any edge weight is added, deleted or if the edge weight increases and decreases. Here I had used Hash map (implemented in C on Windows) and vectors (implemented using C on Windows) and this report will say how I had used hash map to store the SPT and vectors to store the edges in the SPT to solve this issue and found that the running time is almost decreased by 50% from the original implementation of Dijkstra’s algorithm in section 2.3.4. Here even I suggest what more can be the solution for storage of the SPT to solve the issue.

2.2 Introduction:

The shortest path problems are a classical and well studied algorithmic problem of computer science. This problem requires processing of a given graph G = (V, E) on n = |V| vertices and m = |E| edges to compute a data structure using which shortest path or distance between any two vertices can be efficiently calculated. The shortest path problem is to find the shortest path from s to t for each member [s, t] of a given collection of vertex pairs. The graph used for shortest path calculation is weighted, directed graph with weight function w: E -> R mapping edges to real valued weights. The weight w (p) of path p = (vo, v1… vk) is the sum of the weights of its constituent edges ( Cormen 2009 ) :

k

W (p) = å w (vi-1, vi).

i=1

Two famous and thoroughly studied problems are SSSP (Single Source Shortest Paths) and APSP (All Pair Shortest Paths) problem. Most of the applications of the shortest paths problem involve real life graphs and networks which are prone to failure of nodes (vertices) and links (edges). In this section I will focus on single-source shortest-paths problem. The two most commonly used SSSP algorithms are Dijikstra Algorithm and Bellman Ford algorithm.

The BFS to calculate the shortest path for weighted graphs is not possible because we cannot guarantee that the vertex at the front of the queue is the vertex closest to s. Obviously it is the closest in terms of the number of edges you traverse to reach a destination, but it is not the closest in terms of sum of edge weights. So for weighted graphs Dijkstra’s algorithm or Bellman ford Algorithm is used.

Bellman Ford Algorithm:

Bellman Ford algorithm solves the single-source shortest-paths problem in the general case in which edge weights may be negative. Given a weighted, directed graph G = (V, E) with source s and weight function w : E → R, the Bellman-Ford algorithm returns a Boolean value indicating whether or not there is a negative-weight cycle that is reachable from the source. If there is such a cycle, the algorithm indicates that no solution exists. If there is no such cycle, the algorithm produces the shortest paths and their weights ( Cormen 2009 ) . It uses the relaxation technique progressively decreasing an estimate d[v] on the weights of the shortest path from the source s to each vertex v Î V until it achieves the actual shortest path weight d(s, t). Bellman Ford detects the negative cycles (returns false) or returns the SPT (shortest path tree).

BELLMAN-FORD (G, w, s) ( Cormen 2009 )

1 INITIALIZE-SINGLE-SOURCE (G, s)

2 for i ← 1 to |V [G]| – 1

3 do for each edge (u, v) Î E [G]

4 do RELAX (u, v, and w)

5 for each edge (u, v) Î E [G]

6 do if d[v] > d[u] + w (u, v)

7 then return FALSE

8 return TRUE

Relaxing an edge (u, v) means testing whether we can improve the shortest path to v found so far by going through u.

Analysis: The Bellman Ford algorithm runs in time O (VE), as the initialization in Line 1 takes Q (V) times. Instead of greedily selecting the minimum weight node not yet processed to relax, it simply relaxes all the edges and does this in |V| -1 times as in Lines 2-4 each of passes over the edges takes Q(E) times and for loop of lines 5-7 takes O(E) time.

When the algorithm is used to find shortest paths, the existence of negative cycles is a problem, preventing the algorithm from finding a correct answer. However, since it terminates upon finding a negative cycle, the Bellman-Ford algorithm can be used for applications in which this is the target to be sought – for example in cycle-cancelling techniques in network flow analysis. (Bang et al 3rd edn)

This algorithm is used in distance vector routing protocols for example in RIP (Routing Information Protocol).It allows negative weight edges but no negative cost cycles.

Dijkstra’s Algorithm:

Dijkstra’s algorithm is called the single-source shortest path. It is also known as the single source shortest path problem. It computes length of the shortest path from the source to each of the remaining vertices in the graph. It is a Greedy Algorithm. Greedy algorithms use problem solving methods based on actions to see if there’s a better long term strategy. It uses the Greedy approach to solve the single source shortest problem. It repeatedly selects from the unselected vertices, vertex v nearest to source s and declares the distance to be the actual shortest distance from s to v. The edges of v are then checked to see if their destination can be reached by v followed by the relevant outgoing edges. Dijkstra’s algorithm works by solving the sub problem k, which computes the shortest path from the source to vertices among the k closest vertices to the source. For the Dijkstra’s algorithm to work it should be directed- weighted graph and the edges should be non negative. If the edges are negative then the actual shortest path cannot be obtained. It maintains a set S of vertices whose final shortest path weights from the source s have already been determined. The algorithm repeatedly selects the vertex u Î V-S with minimum shortest path estimate, adds u to S and relaxes all edges leaving u ( Cormen 2009 ) . It’s like BFS if all the edge weights of a graph is 1.

Algorithm DijkstraDistances (G, s)

Q ← new heap-based priority queue

1 for all v ∈G. Vertices ()

2 if v = s

3 setDistance (v, 0)

4 else

5 setDistance (v, ∞)

6 l ← Q.insert (getDistance (v), v)

7 setLocator (v,l)

8 while ¬Q.isEmpty ()

9 u ← Q.removeMin ()

10 for all e ∈G.incidentEdges (u)

11 {relax edge e}

12 z ← G.opposite (u, e)

13 r ← getDistance (u) + weight (e)

14 if r < getDistance (z)

15 setDistance (z, r)

16 Q.replaceKey (getLocator (z), r)

Analysis: It has a graph operation of incident edges line 10 is called once for each vertex. We set and get distance (line 3,5,13,14,15,7) and locator labels of vertex z in O (deg (z)) times and this label operations takes O(1) time. The algorithm has some priority queue operations of inserting and removing vertex once from the priority queue where each insertions and removal takes O (log V) time. The key of a vertex in the priority queue is modified at most deg times, where each key change takes O (log V) time. Dijikstra algorithm runs in O ((V + E) log V) time provided the graph is represented by adjacency list structure. Since the graph is connected the running time can also be expressed as O (E log V) time where E is the number of Edges and V is the number of vertices. We can in fact achieve a running time of O (V log V + E) by implementing the priority queue with a Fibonacci heap. (Tarjan 1987).

Dijkstra’s and Bellman Ford similarities and differences:

Both the algorithms are used to find the shortest path of a network where the value of the shortest path may vary according to the needs of the network. There may be a length or cost problem that is needed to be solved. From the perspective of finding the result, these two algorithms satisfy the same expectations.

However, there are some differences which occur during the deciding procedure of vertexes that will be determined for finding the shortest path. If there are negative values in the pre-defined paths of a specific network, Dijkstra’s algorithm is not useful to find the suitable shortest path.

However, Bellman-Ford algorithm can handle negative weights/lengths and continue to complete the process unless there is a complete negative weighted cycle which is reachable from the source vertex in the network.

The vertexes in Dijkstra’s algorithm contain the whole information of a network. There is no such thing that every vertex only cares about itself and its neighbours. But Bellman-Ford algorithm’s nodes contain only the information that is related to. This information allows that node just to know about which neighbour nodes can it connect and the node that the relation come from, mutually.

Dijkstra’s algorithm is faster than Bellman-Ford’s algorithm however the second algorithm can be more useful to solve some problems, such as negative weights of paths.

Dijkstra’s algorithm is faster and more widely used, as in most real-world graphs all edge costs are non negative. The main disadvantages of Bellman–Ford algorithm is (Gupta et al., Eastern Economy edn)

- Does not scale well

- Changes in network topology are not reflected quickly since updates are spread node-by-node.

- Counting to infinity (if link or node failures render anode unreachable from some set of other nodes, those nodes may spend forever gradually increasing their estimates of the distance to it, and in the meantime there may be routing loops)

Bellman Ford algorithm is not recommended for larger networks. Count to infinity problem which I have explained below.

Figure 2.2: Count To infinity problem (Image courtesy, Computer Networks by A. Tanenbaum) (Tanenbaum 3rd edn)

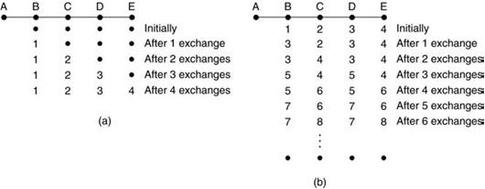

Suppose all nodes have just started up as shown in figure (a). Initially no routes exist as shown by the dots. The figure only explains the routes from A. A receives a routing update from B and A learns that it is just 1 hop away so an entry is made in A’s routing table. B learns a route to C in this same turn from C’s update. It includes this in its next update to A, and hence A learns that C is 1 hop away from B. So, the update is made in A that C is 2 hops away (A to B + B to C). Similarly, A learns that D is 2 hops from B and E is 3 hops, from subsequent updates. Thus A

learns the routes to each node. Now assume that A shuts down. The A-B link goes dead. The metric has become infinity. In this situation when B gets an update from C, it notices that there is a path to A via C and hence updates its routing table (B to C + C to A). This change is reflected in B’s next update. C updates its link to A (A to B + B to C) and so on. As can be seen, the metric will keep on increasing and go on till infinity, and hence the name ‘Count to infinity’. The problem arises because B doesn’t realize that the path to A which C advertised passes through B itself. To resolve this, the maximum hop count is defined which introduces its first disadvantage (large networks). Techniques like split horizon and split horizon with poison reverse are also used to remove this problem.

So by comparing Bellman Ford and Dijkstra’s Algorithm even though Dijkstra’s algorithm cannot handle negative edge weights which is the only disadvantage .Still I will use Dijkstra’s algorithm to extend it to solve the dynamic weight change in network problem because Dijkstra’s algorithm is faster and more widely used, as in most real-world graphs all edge costs are non negative. So this overcomes the disadvantage of Dijkstra’s algorithm as the edge weights of real world network graphs are non negative.

2.3 Implementation of Dijkstra’s Algorithm:

The basic algorithm requires an array to which nodes have been visited, a priority queue to choose which node to visit next and a structure to store the graph. Here we shall use the Dijkstra’s algorithm when the graph is implemented using adjacency matrix and adjacency list and choose the best one to extend upon to provide the solution to the issue.

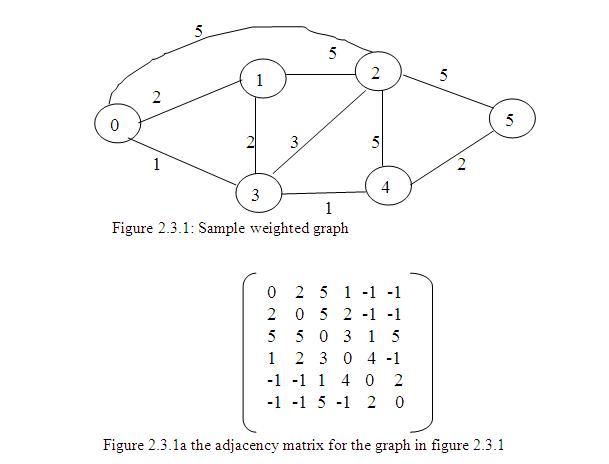

2.3. 1 Dijkstra’s Algorithm when Adjacency Matrix is used to store Graphs

Selecting the right data structure can have a great impact on performance. The two ways to represent a graph are adjacency matrices and by using adjacency list. For this we assume that graph G = (V, E) contains n vertices and m edges (Skiena 2nd edn) . In this section we will study the effect of SSSP algorithm on graph stored in adjacency matrices. Adjacency matrices are another common way of representing graphs, dealing solely with the vertices. An adjacency matrix sets up the vertices on both the vertical and horizontal axes. Vertices that are connected by an edge are marked with a 1. Non-adjacent vertices are marked with a 0. In simple graphs, a vertex is not adjacent to itself. If there are n = |V| vertices v1…vn, this is an n x n array whose (i; j) th entry is

aij = 1 if there is an edge from vi to vj

0 otherwise.

For undirected graphs, the matrix is symmetric since an edge {u; v} can be taken in either direction. The biggest convenience of this format is that the presence of a particular edge can be checked in constant time, with just one memory access. On the other hand the matrix takes up O (n x n) space, which is wasteful if the graph does not have very many edges (Dasgupta et al. 2006) . The adjacency matrix for an undirected graph is symmetric; the adjacency matrix for a digraph need not be symmetric.

Implementation: In this implementation I am reading from a file the edge weights of a graph. In the file the edge weights are mentioned as follows

6

0 2 5 1 -1 -1

2 0 5 2 -1 -1

5 5 0 3 1 5

……….

i.e. first line mention the number of edges and for next line mention the edge weight a source node to destination node ,as from node 0 to node 0 is 0 so the first element is 0 and from 0 to 1 is 2 so please use 2 and so on. And put -1 if there is no connection between two nodes.

The variables I had used here are declared as follows

- int **PATHS this will be used to store the edge weights

- char **ROUTE this will be used to store the shortest path

- int *SHORTEST_PATH this will be used to store the distance of the shortest path

- int *VISITED this will be used to check if the node is visited or not, so that it will not be visited again while finding the shortest path from a source node to a destination node

Reading of the file I am doing it in the function VertexFileBuiltStruct () where i am reading from the file and after that I am initializing the variables which will be used to store the graph and the routes. (For implementation in C see the VertexFileBuiltStruct() in Appendix A2).

The SSSP is then calculated by using the Dijkstra’s algorithm which is very simple, beginning with the source vertex we initialize the source vertex distance to 0 and we do a scan and mark the vertex as scanned and while doing scanning we check if the path over the current vertex is shorter than the previously found one then replace the shorter one. (For implementation in C see the FindTheRoutes () in Appendix A2).

After completing the shortest path calculation I am printing the path from the source to vertex in a file and also here I am calculating the time taken by the algorithm for different size of the graphs. To calculate the time taken by the algorithm in windows I had used GetSystemTimeAsFileTime () API which is available in winbase.h. , and in UNIX we can use gettimeofday () API.

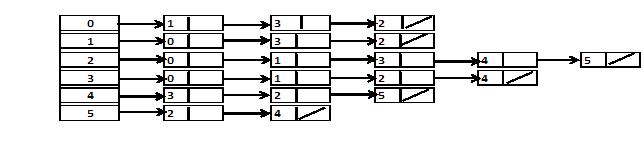

2.3. 2 Dijkstra’s Algorithm when Adjacency List is used to store Graphs

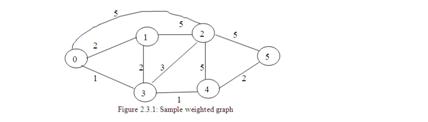

In this representation, each vertex has a list of which vertices it is adjacent to. This causes redundancy in an undirected graph: for example, if vertices A and B are adjacent, A’s adjacency list contains B, while B’s list contains A. Adjacency queries are faster, at the cost of extra storage space. An array Adj of |V | lists, one for each vertex in V. For each u ε V, Adj[u] points to all the vertices adjacent to u. For example for graph in Figure 2.3.1 the adjacency list will be as in figure 2.3.2a

Figure 2.3.2a: Adjacency List representation of the graph

One advantage is, it has O (V + E) storage since the total length of all the lists is 2V (each edge appears twice, once for each of its endpoints) and is good for sparse graph but the disadvantage of it is that it needs to traverse the list to find an edge so the worst case adjacency check will be O (|V|) and the cost of iterating over neighbours is proportional to the degree of the node. It is also quite fast for many applications. The slowest operation that might commonly be used is testing whether a pair of vertices is connected by an edge; this has to be done by scanning through one of the lists, but you could speed it up by sorting the adjacency lists and using binary search. Also this disadvantage can be remedied by an adjacency-matrix representation of the graph, at the cost of using asymptotically more memory ( Cormen 2009 ) . Another disadvantage is that each edge is listed twice, so if the edges carry any extra information such as a length it may be complicated to keep track of both copies and make sure they have the same information.

| n vertices, m edges no parallel edges no self loops Bounds are “big-oh” |

Adjacent List | Adjacent Matrix |

| space | n+m | n x n |

| incidentEdges(v) | deg(v) | n x n |

| areAdjacent(v,w) | min((deg(v),(deg(w)) | 1 |

| insertVertex(o) | 1 | n x n |

| insertEdge(v,w,o) | 1 | 1 |

| removeVertex(v) | deg(v) | n x n |

| removeEdge(e) | 1 | 1 |

Table 2.3.2b asymptotic performance

Implementation: In this implementation I am reading from a file the edge weights of a graph. In the file the edge weights are mentioned as follows

6

0 2 5 1 0 0

2 0 5 2 0 0

5 5 0 3 1 5

……….

i.e. first line mention the number of edges and for next line mention the edge weight a source node to destination node ,as from node 0 to node 0 is 0 so the first element is 0 and from 0 to 1 is 2 so please use 2 and so on. Put 0 when there is no connection between any nodes. The adjacency list I have declared it as

typedef struct node

{

struct node *next;

int vertex,weight;

}node;

node *G[10];//adjacency list

int n;// Number of vertices

After reading the file to populate the adjacency list Then I applied the Dijkstra’s algorithm i.e. dijkstra () to calculate the shortest path and also noted down the running time of various graph sizes. (For implementation in C see the dijkstra() in Appendix B1)

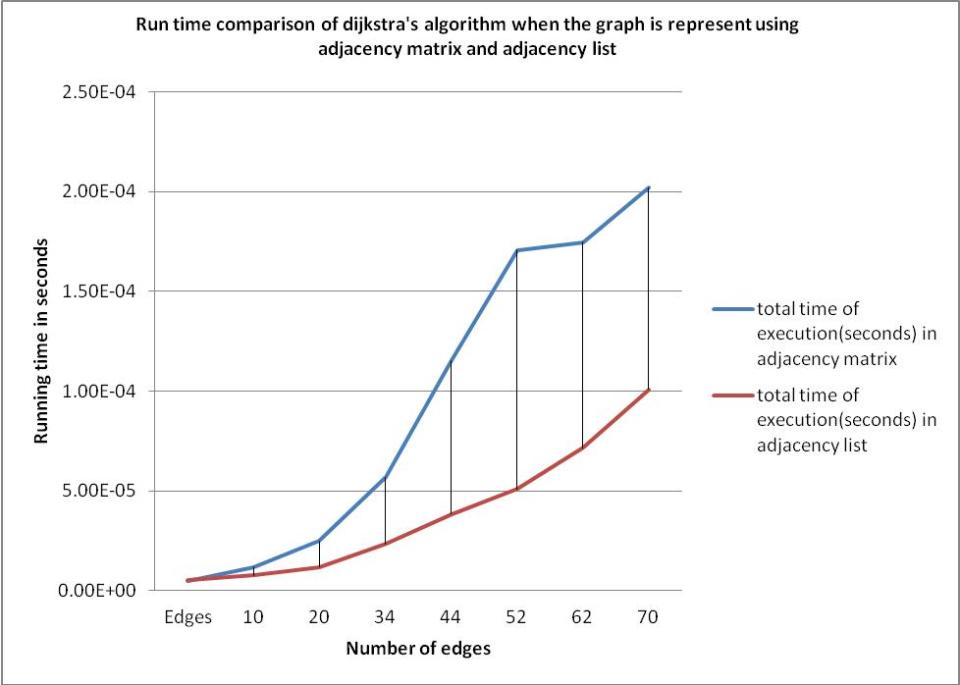

Running Time Analysis of applying Dijkstra’s Algorithm when the graph is represented by Adjacency Matrix and Adjacency List

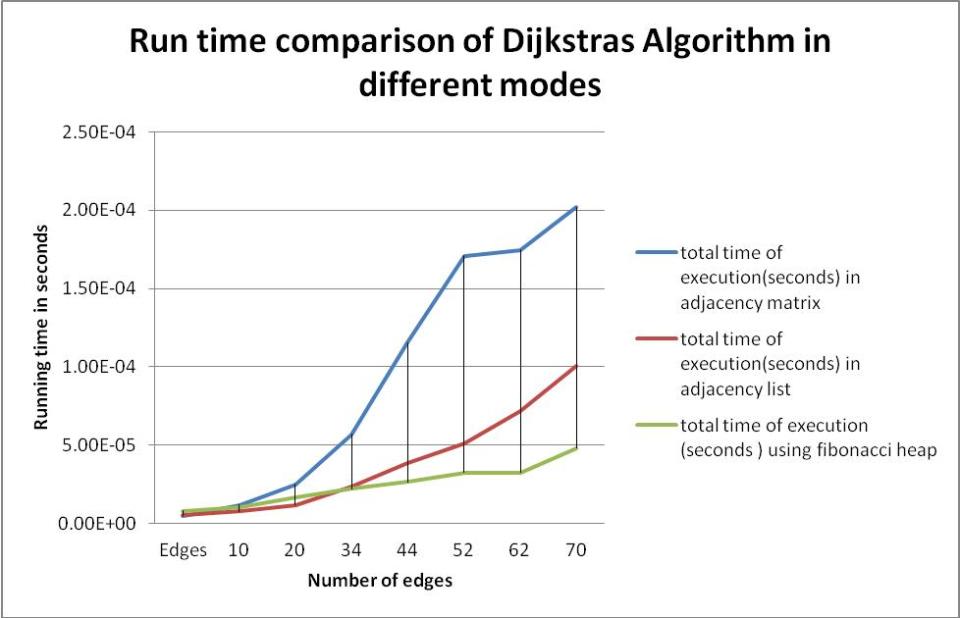

Figure 2.3.2c n(graph size) vs running time comparison of Dijkstra’s algorithm when the graph is represented in adjacency matrix and adjacecny list

So from figure 2.3.2b and from figure 2.3.2c it is clear that graph represented in adjacency list is good for use for extension of the Dijkstra’s algorithm

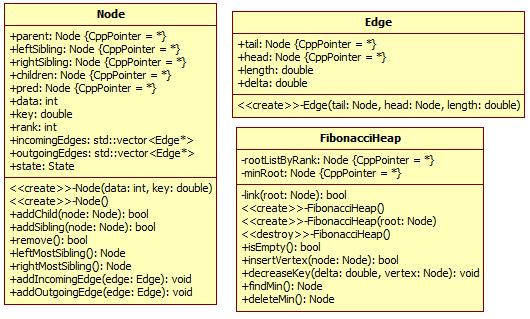

2.3.3 Dijkstra’s Algorithm using Fibonacci Heap as Priority Queue and Adjacency List to store Graph

Implementation (original): The process of removing the minimum vertex from queue requires a delete min operation on the data structure used for queue. Updating the currently best distance requires a decrease key operation and inserting into the queue requires an insert operation. It is important that the data structure used for queue supports these operations with a reasonable time complexity. Heaps are well suited for this as they support all of these operations in reasonable time complexity. The Fibonacci heap support delete min in O (log n) amortized time, and insert and decrease key in O (1) amortized time. For the binary heap the operations delete min, decrease key, and insert all take O (log n) time. First let’s define what is a Fibonacci heap? Fibonacci heap is a heap data structure consisting of collection of trees with a list of root elements. Every node in the heap can have any number of children. Elements are sorted in heap-order: a node does not have a greater key value than the lowest key of its children. Fibonacci heaps are loosely based on Binomial heap but have less rigid structure. Each node x has pointer p[x] to its parent & child [x] to one of its children. Children are linked together in a doubly-linked circular list which is called the child list of x. Each child y in a child list has pointers left[y] and right [y] which points to left and right siblings. If Left[y] == right[y] ==y, then y is the only child. Each node also has degree [x] indicating the number of children of x ( Cormen 2009 ) also knows as the rank. So this means every node in Fibonacci Heap has 4 pointers: 1 to its parent, 2 to its children, and 1 to the data. Since we have an unknown number of children in Fibonacci heaps, we have to arrange the children of a node in a linked list. So, we need at most two pointers to the siblings of every node. This results in a linear double-linked list. Now, we need another pointer to any node of the children list and to the parent of every node. All in all, there are 5 pointers: left sibling, right sibling, parent, children and data.

Now, consider the operations for such a node element which are required for the Fibonacci heap implementation.

The required implementation are addChild (), addSibling () and remove ().

addChild (): Adds a child node to the current node by inserting it into the children list and linking it. This operation takes O (1) time. (For implementation in C++ see Appendix C4)

addSibling (): Adds a node into the children list of the node that the current node belongs to. This operation is done in O (1) time. (For implementation in C++ see Appendix C4)

Remove (): Removes the node from the sibling list and rearranges and refreshes the affected pointers. This is also done in O (1) time. . (For implementation in C++ see Appendix C4)

The basic operations on Fibonacci heaps are:

insertVertex: inserts the node in to the root list and checks if its value is lower than the currently lowest node and changes the access pointer if needed to maintain the minimum heap order property. The time take for this operation is O (1). (For implementation in C++ see Appendix C4)

decreaseKey: The decrease key operation involves decreasing the key of a node, x, by a given amount, cutting the edge joining x and its parent, p, and merging the tree rooted at x back into the root level of the heap. This causes the rank of p to decrease by 1. For the special case where x is already a root node, we just decrease its key. Because the key is decreased, heap order is maintained in all the nodes below x in the tree. So in the implementation in C++ this function decreases the value of the specified node and then the node is removed from the sibling list and is inserted into the root list. This operation needs O (1). (For implementation in C++ see Appendix C4)

findMin: returns the node of the access pointer and this is the node with minimum key. This operation also takes O (1) time. (For implementation in C++ see Appendix C4)

link: If two nodes in the root list have the same rank then the node with the higher key is moved into the children list of the other node. This operation takes O (1). (For implementation in C++ see Appendix C4)

deleteMin: When performing a deleteMin operation, we find the minimum root node and remove it from the heap. The removed root node’s child trees are merged back into the root level of the heap, at the array entry corresponding to its rank. Merging can be thought of as reinserting trees into the heap. Each reinsertion can result in several linking steps. This makes the delete min operation the most time consuming step, with an amortized time complexity of O (log n). This function copies the minimum value node i.e. the node accessed by the access pointer into the root list. After each insertion a linking step is done if necessary and this leads to the minimum element deletion and the node with the minimum key is determined. The mortised time depends on the count of the children of the minimum node and in the worst case for each child a remove, insertion and linking operation is required. (For implementation in C++ see Appendix C4)

After defining the necessary operations of Fibonacci heap I then used this in implementing Dijkstra’s algorithm. Beginning with the source vertex I do a scan and mark it as SCANNED, which means we do a delete min operation and mark the vertex as SCANNED. Then, we repeat this step with the vertices which have an edge with the head on the source vertex i.e. for all incoming edges. Then, we repeat the scan for all vertices which have an edge to the last scanned vertex i.e. if the head of the current edge is not SCANNED and is yet UNLABELLED then we insert the vertex in the heap else if the head of the current edge key which is the weight is greater than the current vertex weight plus the current edge length then do a decrease the key of the vertex with the finite key. During the algorithm, a node can have three states: LABELED, UNLABELED, and SCANNED. Nodes are marked as SCANNED when the shortest path to the target is found. A node is UNLABELED when there is no path found yet (initial state), and LABELED when a path is found, but there may be more shorter path exist. (For implementation in C++ see Appendix C3).

After this algorithm, we have the predecessor for every node in the pred pointer. This pointer points to the next vertex of the shortest path to the target. The result is that we have the shortest path to the target for every vertex. We just have to follow the predecessors:

while (temp)

{

printf (“%d”, temp->data);

temp = temp->pred;

if (temp)

printf (” – “);

}

This will give the shortest path from one node to another node i.e. the shortest path from node 0 to node 2 the above implementation will give output as

0 -> 3 -> 4 -> 2

This structure will give the shortest path tree with the target vertex as the root. For every node we get the parent by the predecessor in that tree.

Now that I have to decide the basic implementation of the Dijkstra’s algorithm to start the extension for solving the issue, I have to see the n vs. running time of all the implementation as shown in fig 2.3.3 a

Figure 2.3.3a Run time comparison of Dijkstra’s algorithm using differenty types

of implementation

From figure 2.3.3a it is clear that the best way to use Dijkstra’s algorithm is to use Fibonacci heap as the Frontier set i.e. the priority queue and the graph should be represented using adjacency list. So I will use this as the base to extend the algorithm to solve the problem mentioned and I will be using C++ on windows to implement this issue, because the Linux virtual machine is not working properly for me and a lot of time is spent on doing research on the issue and so I didn’t find any time to fix the Ubuntu virtual machine. However, since I am able to develop in windows, so it means that I can also able to develop in Linux. So in this implementation where there will be any change in API needed for the particular OS then I will mention it here itself.

2.3.4 Extension of the Algorithm to Improve the Efficiency

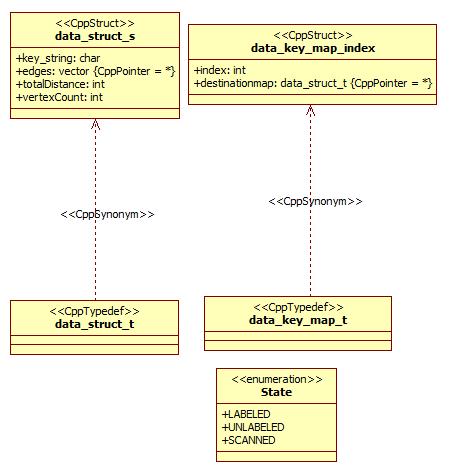

Class diagram of the improvement model

Figure 2.3.4a Class diagram of the data struct used for the hash map

Figure 2.3.4b Class diagram of the Node and Edge for the implementation of the Graph and the Fibonacci heap used as the Frontier set for Dijkstra’s Algorithm

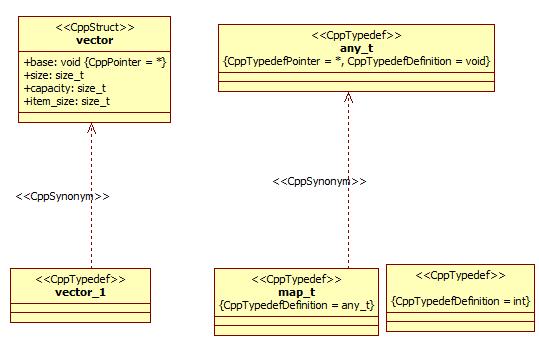

Figure 2.3.4c Class diagram of the vector and hash map used as instance of the Vector and Hash Map

class

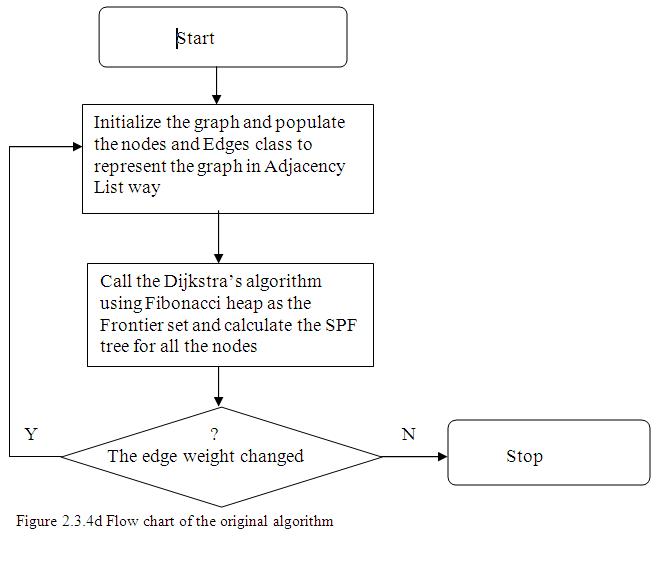

Flow Chart of the original Algorithm:

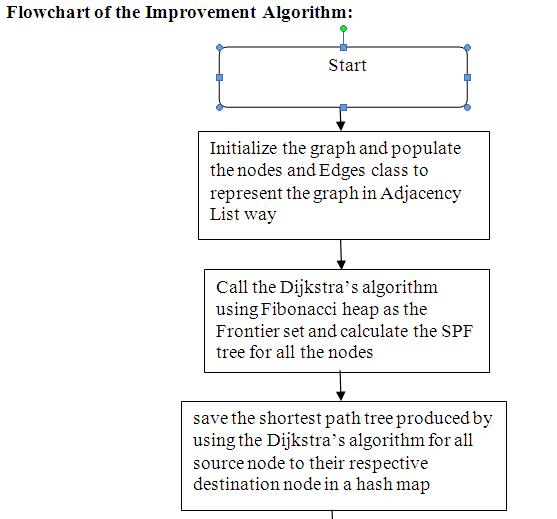

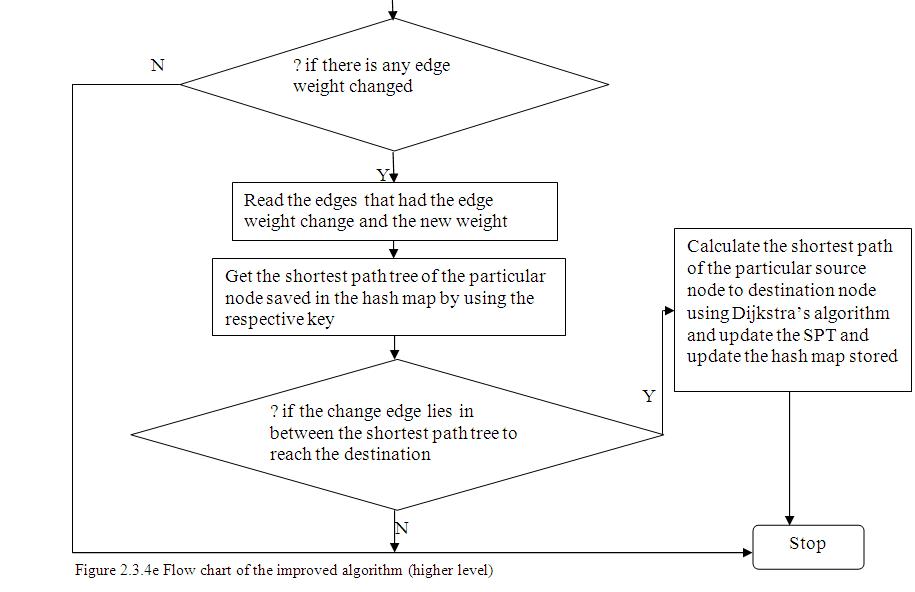

Flowchart of the Improvement Algorithm:

To understand the improvement done by me let’s consider the graph shown in figure 2.3.1.

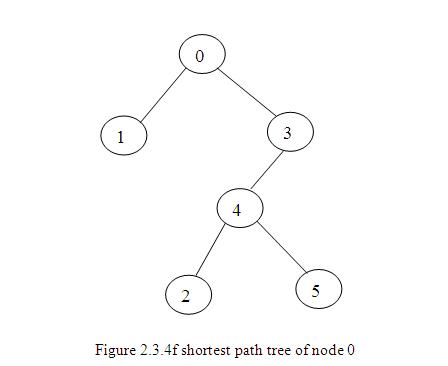

When we apply Dijkstra’s algorithm on any network graph to calculate the shortest path then the algorithm calculates the shortest path for each node (source node) to all its neighbouring nodes. Let us consider the source node as 0 from figure 2.3.1 to calculate the shortest path from it to all its neighbouring nodes. If we plot these shortest paths then we get a tree connected to a root node where the root node is the source node and here the root node will be node 0 and tree is called as the shortest path tree. Similarly in a network, every node calculates its SPT which will contain the shortest paths from the node to all its neighbouring nodes. The figure 2.3.4f is the shortest path tree of node 0 that we get after applying Dijkstra’s algorithm on it.

Implementation (improvement implementation will use the implementation of the base implementation as in section 2.3.3):

I will be extending the implementation as mentioned in section 2.3.3. For the first time run once the complete Dijkstra’s algorithm completely. This will produce the SPF tree for each node. In this example I am taking the example of SPF tree of node 0 only. To store the SPF tree of each node I used Hash map. The hash map will store the branch of the SPF tree of a particular node. For example for node 0 the SPF tree is as in figure 2.3.4f. So this mean the following is the shortest path from node 0 to rest of the neighbouring nodes.

0 -> 1 the shortest path will be 0 -> 1

0 -> 2 the shortest path will be 0 -> 3 -> 4 -> 2

0 -> 3 the shortest path will be 0 ->3

0 -> 4 the shortest path will be 0 ->3 -> 4

0 -> 5 the shortest path will be 0 ->3 -> 4 -> 5.

So the enhanced algorithm will now store the paths of 0 ->1, 0 -> 2 … 0 -> 6 in the hash map. The hash map key will be a char string and will be formulated by adding a string prefix of “spffib” followed by the source and destination node i.e. to store the shortest path of 0 -> 1the key will be spffib01, for 0 -> 2 the key will be the spffib02 etc. The value of the key will be the vector of array of nodes that forms the shortest path, i.e. the value of key “spffib01” will have the vector of array of nodes 0 and 1 and the value of the key “spffib02” will have the vector of array of nodes 0,3,4,2 and so on.

Hash Table is a data structure in which keys are mapped to array positions by a hash function. This table can be searched for an item in O(1) time using a hash function to form an address from the key. The easiest way to conceptualize a hash table is to think of it as an array. When a program stores an element in the array, the elements key is transformed by a hash function that produces array indexes for that array.

Hash table has two main things .First is the hash function and the second is the array i.e. the table of size N.

To store the key value I am using the struct as follows

typedef struct _hashmap_element{

char* key;

int in_use;

any_t data;

} hashmap_element;

A hash map has somemaximum size ,current size and data to hold. So for this I defined it as

typedef struct _hashmap_map{

int table_size;

int size;

hashmap_element *data;

} hashmap_map;

When more than one element tries to occupy the same array position, we have a collision.

Collision is a condition resulting when two or more keys produce the same hash location.

Now, another question arises: can’t we generate the hash function that never produces a collision? The answer is that in some cases it is possible to generate such a function. This is known as a perfect hash function.

Perfect Hash Function is a function which, when applied to all the members of the set of items to be stored in a hash table, produces a unique set of integers within some suitable range. Such function produces no collisions.

Good Hash Function minimizes collisions by spreading the elements uniformly throughout the array.

Here I had used strings as keys . In my hash function the key string is first getting converted to an unsigned long using a crc 32 function . I have borrowed the tables directly from the implementation as in (Brown,2012) , and made some minor changes to the crc32-function (including changing the interface).( For implementation in C ,see static unsigned long crc32_tab[] in Appendix D2).The string to unsigned long is being done in unsigned long crc32().( For implementation in C ,see Appendix D2)

After the key string getting converted to an unsigned long key I am using Robert Jenkins’ 32 bit Mix Function (Wang,1997) and then I am using Knuth’s Multiplicative Method where The key is multiplied by the golden ratio of 2^32 (2654435761) as key = (key >> 3) * 2654435761 to produce a hash . After this I am using modulo arithmetic to transform a key to generate the address as key % m->table_size.Initial table size is being valued to 256. ( For implementation in C ,see Appendix D2).

However, finding a perfect hash function that works for a given data set can be extremely time consuming and very often it is just impossible. Therefore we must live with collisions and learn how to handle them.

To handle collisons I am using linear probing technique.

Linear Probing is one type of rehash (getting a new index) – a very simple one. In case of linear probing, we are looking for an empty spot incrementing the offset by 1 every time. We explore a sequence of location until an empty one is found.Here the maximum chain length is taken to be 8.( For implementation in C ,see the hashmap_hash () in Appendix D2)

And if the hashmap_hash returns MAP_FULL this means that the array is full then I am Doubling the size of the hashmap, and rehashing all the elements. ( For implementation in C, see the hashmap_rehash() in Appendix D2)

A hash map is created by using the hashmap_new () which returns an empty hash map or NULL on failure.

To add a value with a key in hash map int hashmap_put(map_t in, char* key, any_t value) is used which adds an element in the hash map and return 0 for success or -1 if out of memory.

( For implementation in C, see in Appendix D2)

To get an element from the hash mapwhen a key is given we can use hashmap_get(map_t in, char* key, any_t *arg) which gets an element in tha hash map and populate in the arg variable and return 0 on success or -3 if element doesnot exists. ( For implementation in C, see in Appendix D2)

To remove an element from the hash map we can use hashmap_remove(map_t in, char* key)

By providing the map and the key to be removed.It returns 0 for success and -3 if the element doesnot exist. ( For implementation in C, see in Appendix D2)

After using the hash map we should free up the resource by calling the hashmap_free () which frees the hash map. ( For implementation in C, see in Appendix D2)

To get the length of the hash map we can use hashmap_length() which returns the length. ( For implementation in C, see in Appendix D2)

I had used vectors to store the list of nodes forming a shortest path tree from a source to destination node. Vectors are a kind of sequence container. As such, their elements are ordered following a strict linear sequence.Vector containers are implemented as dynamic arrays; Just as regular arrays, vector containers have their elements stored in contiguous storage locations, which means that their elements can be accessed not only using iterators but also using offsets on regular pointers to elements. ( For implementation in C++ ,see Appendix C3)

But unlike regular arrays, storage in vectors is handled automatically, allowing it to be expanded and contracted as needed. Here to implement the vector I am using in this way

typedef struct vector {

void *base; /**< Raw memory for items */

size_t size; /**< The number of inserted items */

size_t capacity; /**< The number of potential items before a resize */

size_t item_size; /**< The number of bytes occupied by an item */

} vector;

To create a vector vector_create(size_t item_size, size_t capacity) is used which takes the size of each elements in bytes and the capacity i.e. the default amount of space needed to create a vector.If the capacity is 0 an empty vector is created. ( For implementation in C, see in Appendix E2) .

To insert elements in the vector vector_insert(vector *v, void *item, size_t index) is used whhich takes the pointer of the vector where the elements needs to be inserted,pointer to the item being inserted the index where the item needs to be inserted.It returns true/ Non zero if the insert succeeded false of 0 otherwise.Here the vector may be resized to make room. The item pointed to is copied by value into the vector. ( For implementation in C, see in Appendix E2)

If you want to clone a vector then vector_clone can be used which returns a pointer to the new vector object or NULL on failure. ( For implementation in C, see in Appendix E2)

To clear a vector object created by vector_new() to an empty state you can use vector_clear.

( For implementation in C, see in Appendix E2)

If you want to resize a vector object to a specific capacity then vector_resize can be used.

( For implementation in C, see in Appendix E2)

To get the size of the vector we can use vector_size. ( For implementation in C, see in Appendix E2)

If we want to retrieve an item stored at specified index then we can use vector_at. It returns the pointer to the item located at the specified index. ( For implementation in C, see in Appendix E2)

To remove an item at a specified index then we can use vector_remove. All items following the found value will be shifted to fill in the hole. ( For implementation in C, see in Appendix E2)

You can use vector_remove_if to remove an element which matches the predicate or a specific condition. Here also all items following the found value may be shifted to fill in the hole.

( For implementation in C, see in Appendix E2)

To search for a particular value in the vector youu can use vector_find ,which will return the index of the found valueelse -1 if item not found.Here a byte-by-byte comparison is used to test equality. ( For implementation in C, see in Appendix E2)

To search for a particular item when a condition is matched specified in the predicate then vector_find_if can be used.Here too the index of the found value is returned , or (size_t)-1 if not found. ( For implementation in C, see in Appendix E2)

When the vector is full and the insertion may cause an exception then the size is automatically adjusted by using a static auto_capacity function. If the vector can grow it returns true or non

zero else false or zero otherwise.If the capacity while creation was zero then the we do automatic increase of capacity by 4 and if the capacity is mentioned while creation then when the vector is full and with the subsequent insertionof an item would increase the capacity by 50% of the specified capacity. ( For implementation in C, see in Appendix E2)

After repeated testing on the same graph by changing weights I found that, if the edge weights belong to the SPT then the nodes after the new edge weight node gets affected and rest all remains the same. So calculating the SPT for all the nodes is unnecessary. To understand consider the case when the edge weight between node 1 and node 3 is removed in figure 2.3.1. Clearly in the SPF tree of figure 2.3.4f the edge weight between node 1 and node 3 does not have any role so the normal SPF algorithm would have calculated the SPF to all the nodes from the source node 0. But this is inefficient because the node 1 and node 3 does not belong to the SPF tree of node 0 and thus removing the edge between node 1 and node 3 will not have any changes in the SPF tree of node 0, so we need to avoid the use of SPF run again on node 0. So we need to device an algorithm which will make these checks before re-running the SPF algorithm whenever there is any edge weight change happens in a network graph. This extension to the Dijkstra’s algorithm is best explained using an example. So let us consider the impact of the following edge weight changes on graph in the figure 2.3.1. Let us consider S as the source of the calculation of SPF algorithm and is the root of the SPF tree.

First let us consider the case when the cost of the communication link from node A to node B increases/decreases. There are two possibilities to check for the shortest path calculation.

- If the change edge is not in the SPF tree means the shortest path from S to B does not pass through A then no change in the SPF tree is required and since the edge was not the pair of any shortest path prior to the change, so it will certainly not be used when the cost increases.

- If the link is in the SPF tree. Then the cost to reach B from source S increases as well as the cost to reach any other node would also increase whose route from S passes through B. So the nodes rooted at B will be the candidates for changed cost and nodes not in the sub tree will not be calculated.

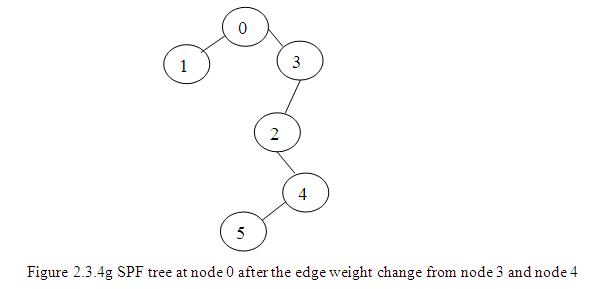

For example in my implementation suppose the edge weight between node 3 and 4 increases/decreases from 1 to 5 then since the edge 3-4 is in the SPF tree so the SPF tree will be affected. So now what all shortest paths will get affected? Clearly the shortest path which has the edge 3-4 in the route to reach a destination will be affected. So in the figure 2.3.4a the shortest path from 0 -> 4, 0 -> 2 and 0 -> 5 will be affected. In my algorithm I am checking whether the changed edge weight would affect the SPF tree or not. This checking I am doing it in the function CheckAndCallDijikstraIfNeeded () (For implementation in C++, see Appendix 2.3.4b). In this function I am checking if the changed nodes are present in the shortest path of each node that I had stored in the hash map. If the changed nodes are present in the path then I am calculating the shortest path from source to the given node only and then changing the hash map stored.

In our example the edge connecting node 3 and node 4 is increased from 1 to 5 so CheckAndCallDijikstraIfNeeded() will first get the hash map stored in “spffib01” since it is not present then it will check the shortest path present in “spffib02”. In this the edge 3-4 lies in the route to reach node 2 from node 0 so I am calculating the shortest path from node 0 to node 2 and updating the hash map. In this way if we move then the Dijkstra’s algorithm will be applied only to calculate the shortest path to reach node 2, node 4 and node 5 instead of calculating the shortest path to reach all the nodes from node 0. Also CheckAndCallDijikstraIfNeeded() checks

if in a shortest path by getting the value of a key the last node is the changed node then still there is no need to calculate the shortest path ,just increase the weight of the edge by the new weight in the SPF tree stored in the hash map. i.e. for example if there is a change in edge weight from node 0 to node 1 then since node 1 is the last node and no other nodes lies after it in the SPF tree so just change the weight of the edge connecting the node 0 and node 1 in the SPF tree with the new edge weight.So now the SPF tree would be as in figure 2.3.4g

Similar check I am doing when the edge weight is decreases.

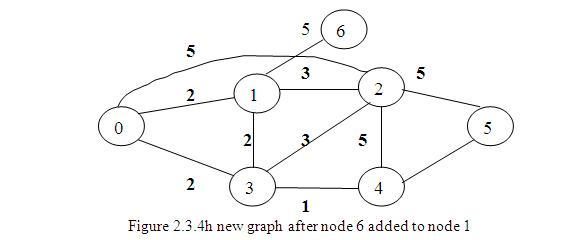

Second, let us consider the case when there is the addition and deletion of the new nodes in the graph.

Consider the case where the edge between node 1 and node 3 is removed. Since the edge does not lie in the SPF tree of node 0 as in the figure 2.3.4 a, so there will be no Dijkstra’s algorithm re run for the calculation again and hence there will be no change of SPF tree.

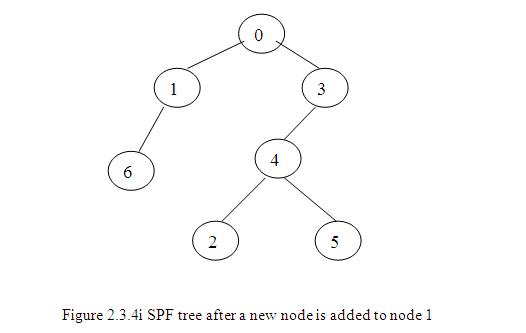

Consider the case when a new node 6 is added to node 1

Since from SPF tree of node 0 in figures 2.3.4f it is clear that the edge 1-6 does not lie in the SPF tree. So just add the node 6 under node 1 in the SPF tree and no need of entire Dijkstra’s algorithm calculation and we get the new SPF tree as in figure 2.3.4i

2.4 Conclusion and alternative suggestion for the solution

In conclusion, the basic SPF algorithm requires an array to which nodes have been visited, a priority queue to choose which node to visit next and a structure to store the graph. So we found that the Dijkstra’s algorithm performs much better when the graph is used as an adjacency list than when the graph is implemented using adjacency matrix. We also found that when we use Fibonacci heap as a Frontier set to store the nodes then the Dijkstra’s algorithm performance increases to an O(nlogn + m) where n = number of nodes and m = number of edges running time from O ( ( n+m)log n) which is O (m log n) if all vertices are reachable from the source.

Also the SPF algorithm without any modification takes a running time that is directly proportional to the number of nodes where as the running time of the proposed solution is directly proportional to the size of the shortest path tree or sub tree. Hence because of this it performs much better than using of original algorithm to entire nodes in graph when there is an edge weight change.

Future Work

Instead of using hash map and vectors to hold the shortest path routes from source to destination, it could have been more convenient if we could have used Hash map and tree i.e. the SPT and a 2D matrix to quickly detect if there exist any connection between two nodes. The hash map would store for a given node as key and the value as a tree i.e. the SPT. Suppose there is a node change then retrieve the SPT from the hash map using the key. Check if the edge lies in between the route to reach a destination by using DFS (Depth First Search).If it is present then compute the shortest path from the source to only that destination that gets affected by the change.Another solution is to divide the graph into various regions and build the SPT of these regions in the hash map instead of creating SPT for each node. So whenever there is any edge weight change then check if the edge lies in any route of all the regions and rebuild the SPT again.Further studies can be based on applying good design pattern for more optimized results. Also we can study more on modify, remove and addition features of node in the graph. Also we can study on storing the SPT by using AVL trees, Radix and other specialised data structures and check their performance with that of the performance of using hash maps. I has used hash map and also investigated on use of Binary search trees using linked list and binary heaps to store the shortest path tree. However there are other data structures like Dial’s Data structure (Dial et al. 1969 ) and Radix Heap (Ahuja 1993) .

Performance Testing and Analysis

To measure the performance of an algorithm we need to find its running time of it to completely run the algorithm to the end by giving various inputs of different size. So we must measure the performance by plotting the graph using n vs. running time method. Here n is the different size of the graphs. I will measure the performance taking graphs of varied sizes spanning from 10 edges to 80 edges.

When timing code to identify performance bottlenecks, we want to use the highest resolution timer the system has to offer. So here I will be using the windows API QueryPerformanceCounter (). It requires “windows.h” header file

Its signature is

BOOL WINAPI QueryPerformanceCounter

(

__out LARGE_INTEGER *lpPerformanceCount

);

Parameters

lpPerformanceCount [out]

Type: LARGE_INTEGER*

A pointer to a variable that receives the current performance-counter value, in counts.

Return value

Type: BOOL

If the function succeeds, the return value is nonzero. If the function fails, the return value is zero.

On a multiprocessor computer, it should not matter which processor is called. However, you can get different results on different processors due to bugs in the basic input/output system (BIOS) or the hardware abstraction layer (HAL).

Several timers of differing accuracy are offered by the operating system:

Function Units Resolution

——————————————————————————————————————

Now, Time, Timer seconds 1 second

GetTickCount milliseconds approx. 10 ms

TimeGetTime milliseconds approx. 10 ms

QueryPerformanceCounter QueryPerformanceFrequency same

I am using it as follows. I had placed the API to initialize it just at the beginning of the algorithm

QueryPerformanceCounter(&t0Old);

QueryPerformanceFrequency(&freqOld);

At the termination of the algorithm I am calculating the elapsed time as follows

QueryPerformanceCounter(&tFOld);

tDiffOld.QuadPart = tFOld.QuadPart – t0Old.QuadPart;

elapsedTimeold = elapsedTimeold + (tDiffOld.QuadPart / (double) freqOld.QuadPart);

resolutionOld = 1.0 / (double) freqOld.QuadPart;

The elapsed time will give us the exact time of the algorithm to execute to completion in seconds.

In Linux machines we can use gettimeofday () api . We can use this as follows. For this let us declare a function to calculate the start and end time. Start time is the time when the program counter enters the algorithm and end time is when the algorithm completes its execution. So the elapsed time is the time obtained after subtracting the start time from the end time and elapsed time is the time of execution of the algorithm.

__int64 GetTimeMs64(){

struct timeval tv;

gettimeofday(&tv, NULL);

uint64 ret = tv.tv_usec;

/* Convert from micro seconds (10^-6) to milliseconds (10^-3) */

ret /= 1000;

/* Adds the seconds (10^0) after converting them to milliseconds (10^-3) */

ret += (tv.tv_sec * 1000);

return ret;

}

To use GetTimeMs64() call at the entry of the algorithm which will give us the start time and again call GetTimeMs64() at the end of the execution of the algorithm and this will give the end time so the elapsed time will be the endtime-starttime. This will give us the time in milliseconds. For small size of n the elapsed time may be 0.000 milliseconds so to get a valid time call the algorithm for atleast 1000 to 1000000 times and then divide the elapsed time by the total number of times yoo had called the algorithm.

Since QueryPerformanceCounter is a high resolution timer and gives the accurate result at single shot that is even for small inputs it does not require the algorithm to call multiple times to get an approximate time of execution. So I am using QueryPerformanceCounter() API to calculate the execution time.

Analysis of Algorithms and Performance Plot

Performance Plot:

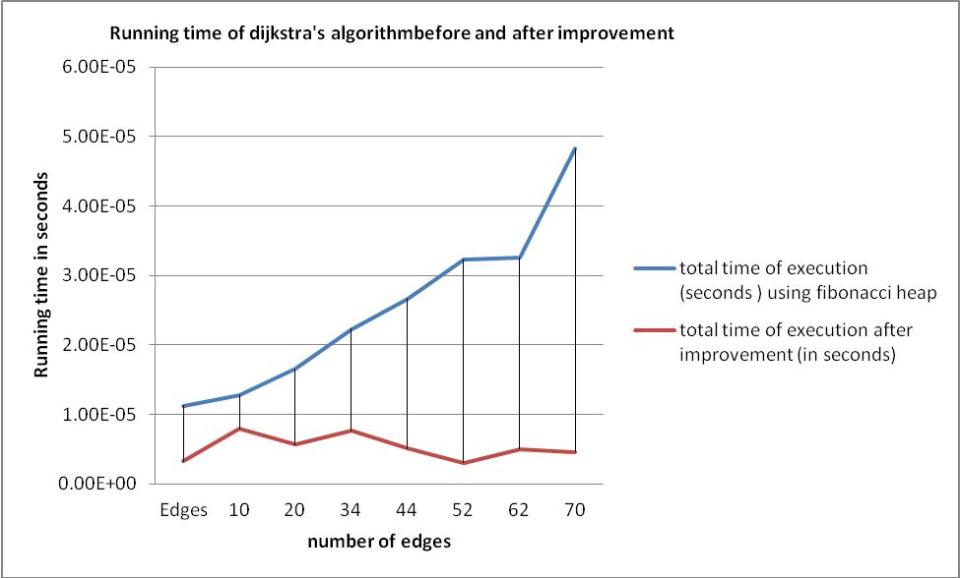

| No. Of nodes | Edges | total time of execution (seconds ) using Fibonacci heap | total time of execution after improvement (in seconds) |

| 5 | 10 | 1.13E-05 | 3.21E-06 |

| 6 | 20 | 1.28E-05 | 7.91E-06 |

| 8 | 34 | 1.66E-05 | 5.63E-06 |

| 11 | 44 | 2.23E-05 | 7.64E-06 |

| 14 | 52 | 2.67E-05 | 5.07E-06 |

| 16 | 62 | 3.24E-05 | 3.00E-06 |

| 16 | 70 | 3.26E-05 | 5.00E-06 |

| 22 | 80 | 4.83E-05 | 4.60E-06 |

I had calculated and noted down the execution of the algorithm on various size of the graphs for the original version and also for the improved version using the QueryPerformanceCounter API and the values are in the table 3.5a

So with these values the n vs. running time plot is in figure 3.5b

Figure 3.5b running time analysis of Dijkstra’s algorithm and with the modified algorithm

Observation from the figure 3.4a and figure 3.4b it was found that the running time of Dijkstra’s algorithm without any modification is directly proportional to the total number of nodes and edges of the entire graph. However the running time of the modified algorithm is directly proportional to the nodes affected by the edge weight changes i.e. the size of the sub tree which leads to the better performance. Whenever an edge weight change occurs the algorithm will try to isolate (or identify) the group of nodes which are affected by the change. In some cases the topological change will affect a small number of nodes, and hence the running time of the improved algorithm to update the shortest paths to these nodes will be minimal. We could find that there is approximately 80% gain in running time with the improved algorithm that works on the SPT instead of the whole graph when there is an edge weight change.

Analysis of the Algorithm:

Let G be a directed graph, one of whose vertices is distinguished as the source s, and each of whose edges (v, w) has a nonnegative length I(v, w). The length of a path in G is the sum of its edge lengths; the distance from a vertex v to a vertex w is the minimum length of a path from v to w. A minimum-length path from v to w is called a shortest path. The single source shortest path problem is that of finding a shortest path from s to v for every vertex v in G. We shall denote the number of vertices in G by n and the number of edges by m. We assume that there is a path from s to any other vertex; thus m ³ n – 1. Dijkstra’s algorithm solves the shortest path problem using a tentative distance function d from vertices to real numbers with the following properties:

(1) For any vertex v such that d (v) is finite, there is a path from s to v of length d (v);

(2) When the algorithm terminates, d (v) is the distance from s to v.

Initially d(s) = 0 and d (v) = ¥ for v # s. During the running of the algorithm, each vertex is in one of three states: unlabeled, labelled, or scanned. Initially s is labelled and all other vertices are unlabeled. The algorithm consists of repeating the following step until there are no labelled vertices (every vertex is scanned):

Scan. Select a labelled vertex v with d (v) minimum. Convert v from labelled to scanned, for each edge (v, w) such that d (v) + l (v, w) < d (w), replace d by d (v) + l (v, w) and make w labelled.

The non-negativity of the edge lengths implies that a vertex, once scanned, can never become labelled and further that the algorithm computes distances correctly. To implement Dijkstra’s algorithm, we used a heap to store the set of labelled vertices. The tentative distance of a vertex is its key.

Initialization requires one make heap and one insert operation. Each scanning step requires one delete min operation. In addition, for each edge (v, w) such that d(v) + I(v, w) < d(w), we need either an insert operation (if d(w) = ¥) or a decrease key operation (if d(w) < ¥). Thus there is one make heap operation, n insert and n delete min operations, and at most m decrease key operations. The maximum heap size is n – 1. We used a Fibonacci heap, so the total time for heap operations is O (n logn + m). The time for other tasks is O(n + m). Thus we obtain an O(nlogn + m) running time for Dijkstra’s algorithm. We computed the shortest paths as well as the distances so for each vertex v, we maintain a tentative predecessor pred (v) that immediately precedes v on a tentative shortest path from s to v. When replacing d(w) by d(v) + I(v, w) in a scanning step, we define pred = v. Once the algorithm terminates, we can find a shortest path from s to v for any vertex v by following predecessor pointers from v back to s. Augmenting the algorithm to maintain predecessors adds only O (m) to the running time.

Storing the shortest path tree in hash table is a 0(1) operation and also finding the stored SPT will take O (1) time. After retrieving the tree finding the edge if it can hamper the shortest path route is a O (n sub tree) operation and here the n is the number of nodes in the shortest path which is less than the actual number of nodes in the graph. And updating the SPT is also an O (1) operation. So if we see in the improved version there is a major jump in the execution time from O (n log n +m) to

O (n sub tree). Hence we can conclude that the improved version is really a better way to implement in dynamic networks to compute the shortest path.

References:

[1] Ahuja, R., et al., (1 993) Network Flows: Theory, Algorithms and Applications, Prentice Hall

[2] Bang, Jensen., Jorgen., Gregory, Gutin. “Section 2.3.4: The Bellman-Ford-Moore algorithm”. (First edn) Digraphs: Theory, Algorithms and Applications

[3] Brass,P.(2008)AdvancedDataStructures.CambridgeandNewYork:CambridgeUniversityPress

[4] Brown,S.G.crc32.c [online] available from <http://www.opensource.apple.com/source/xnu/xnu-1456.1.26/bsd/libkern/crc32.c > [ 1 July 2012]

[5] Cormen,T.H.,Leiserson,C.E.,Rivest,R.L.,andStein,C.(2009)IntroductiontoAlgorithms,3rd edn,NewDelhi:PrenticeHallofIndia

[6] Dasgupta,S., Papadimitriou,C.H.,Vazirani,U.V.,C(2006) Algorithms,July 18,2006

[7] Dial, R.B . et al.,( November 1969) Algorithm 360: Shortest-Path Forest with Topological Ordering. Communications of the ACM, (V 12), p. 11, 632-633.

[8] Fredman,L.M., Tarjan,R.E. (July 1987) Fibonacci Heaps and Their Uses in Improved Network Optimization Algorithms. Journal of the Association for Computing Machinery,(V34),596-615

[9] Gupta, P., Aggarwal, V.,Varshney,M. Design and Analysis of Algorithms, Eastern Economy

edn

[10] Knuth,D.E.(1998) The Art of Computer Programming, Volume3:SortingandSearching ,2nd edn , NewDelhi :Addison-WesleyIndianReprint

[11] Skiena,S.S. The Algorithm Design Manual, 2nd edn,Springer Science and Business Media

[12] Tanenbaum,S.A.,Computer Networks,3rd edn,Pearson

[13] Wang,T.(Jan 1997) Integer Hash Function, Version 3.1, last update Mar 2007

Appendix

A: Implementation of Dijkstras Algorithm in C When The Graph is Implemented Using Adjacency Matrix

A1 da_char.h

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <time.h>

#ifdef WIN32

#include <Windows.h>

#else

#include <sys/time.h>

#include <ctime.h>

#endif

#define MALLOC(t,n) (t *) malloc( n*sizeof(t) )

#define MALLOC2D(t,n) (t **) malloc(n*sizeof(t) )

#define CHECKPTR(p) if (!p) Error(“Out of memory”)

#define MAX_INT 999999999

int vertexNum;

__int64 GetTimeMs64();

void Error (char *str);

void VertexFileBuiltStruct( char *str);

void FindTheRoutes(int source);

void writeToOutput(char *outputName, int source);

A2 da_char.c

#include”da_char.h”

extern int vertexNum;

extern int **PATHS = (int**) NULL;

extern char **ROUTE = (char**) NULL;

extern int *SHORTEST_PATH = (int*) NULL;

extern int *VISITED = (int*) NULL;

/* Returns the amount of milliseconds elapsed since the UNIX epoch. Works on both

* windows and linux. */

__int64 GetTimeMs64()

{

#ifdef WIN32

/* Windows */

FILETIME ft;

LARGE_INTEGER li;

__int64 ret;

/* Get the amount of 100 nano seconds intervals elapsed since January 1, 1601 (UTC) and copy it to a LARGE_INTEGER structure. */

GetSystemTimeAsFileTime(&ft);

li.LowPart = ft.dwLowDateTime;

li.HighPart = ft.dwHighDateTime;

ret = li.QuadPart;

ret -= 116444736000000000LL; /* Convert from file time to UNIX epoch time. */

ret /= 10000; /* From 100 nano seconds (10^-7) to 1 millisecond (10^-3) intervals */ return ret;

#else

/* Linux */

struct timeval tv;

gettimeofday(&tv, NULL);

uint64 ret = tv.tv_usec;

/* Convert from micro seconds (10^-6) to milliseconds (10^-3) */

ret /= 1000;

/* Adds the seconds (10^0) after converting them to milliseconds (10^-3) */

ret += (tv.tv_sec * 1000);

return ret;

#endif

}

//////////////////////////////////////

//

// if any error occurs we can write it

//

//////////////////////////////////////

void Error (char *str)

{

printf(“\nERROR: %s\n”, str);

return;

}

//////////////////////////////////////

//

// Read the file and build the dynamic structure

//

//////////////////////////////////////

void VertexFileBuiltStruct( char *str)

{

FILE *fp_vertex;

int i,j;

int num;

fp_vertex = fopen(str, “r” );

rewind ( fp_vertex );

fscanf ( fp_vertex, “%d”, &vertexNum);

//allocate space dynamicly and check for errors

SHORTEST_PATH = MALLOC( int , vertexNum );

CHECKPTR( SHORTEST_PATH );

VISITED = MALLOC( int , vertexNum );

CHECKPTR( VISITED );

PATHS = MALLOC2D( int , vertexNum );

CHECKPTR( PATHS );

ROUTE = MALLOC2D( char , vertexNum );

for(i=0; i<vertexNum; i++)

{

PATHS[i] = MALLOC( int , vertexNum );

CHECKPTR( PATHS[i] );

ROUTE[i] = MALLOC( char , vertexNum );

CHECKPTR( ROUTE[i] );

//our routes are now empty

strcpy(ROUTE[i], “”);

//say that Y is empty

VISITED[i] = 0;

//set the paths as very long

SHORTEST_PATH[i] = MAX_INT;

}

//read the distances

for(i=0; i<vertexNum; i++)

{

for(j=0; j<vertexNum; j++)

{

fscanf ( fp_vertex, “%d”, &num);

PATHS[i][j] = num;

}

}

fclose( fp_vertex );

}

//////////////////////////////////////

//

// find the shortest paths for the beginnig vertex

// and store the routines that on which vertex can we go..

//

//////////////////////////////////////

void FindTheRoutes(int source)

{

char *ptr;

int i, j;

int my_vertex;

int ek;

SHORTEST_PATH

= 0;

my_vertex = source;

i=0;

while( i++ < vertexNum )

{

//we will decide to which vertex is closer that is not in Y (not visited)

for(j=0; j<vertexNum; j++)

{

//if we find a vertex that is not Y, lets work on it

if( VISITED[j] == 0 )

{

//if there is a connection with the vertexes

if( PATHS[my_vertex][j] != 0 )

{

//decide the shortest paths

if( SHORTEST_PATH[j] > PATHS[my_vertex][j] +

SHORTEST_PATH[my_vertex] )

{

//we found more shortest way.. change the old one

SHORTEST_PATH[j] = PATHS[my_vertex][j] +

SHORTEST_PATH[my_vertex];

//and change the route

strcpy(ROUTE[j], ROUTE[my_vertex]);

ptr=ROUTE[j];

while( *ptr != ” )

{

++ptr;

}

*ptr = ‘0’+ my_vertex;

++ptr;

*ptr = ‘-‘;

++ptr;

*ptr = ”;

}

}

}

}

ek = MAX_INT;

for( j=1; j<vertexNum; j++)

{

if( VISITED[j] == 0 )

{

if( SHORTEST_PATH[j] < ek )

{

ek = SHORTEST_PATH[j];

my_vertex = j;

}

}

}

VISITED[ my_vertex ] = 1;

}

//add the last vertexes to routines…

//if they are empty write “No connection”

for( i=0; i<vertexNum; i++)

{

if( ROUTE[i][0] != ” || source == i)

{

ptr=ROUTE[i];

while( *ptr != ”)

{

++ptr;

}

*ptr = ‘0’+ i;

++ptr;

*ptr = ”;

}

else

{

strcpy(ROUTE[i], “No path exists”);

}

}

}

//////////////////////////////////////

//

// writes the results to output file

//

//////////////////////////////////////

void writeToOutput(char *outputName, int source)

{

FILE *fpOut;

int j,k;

int len;

int emptySpace = 10;

fpOut = fopen( outputName , “a” );

rewind ( fpOut );

fprintf(fpOut, “Nodes Path Distance \n”);

fprintf(fpOut, “——————————\n”);

for( j=0; j<vertexNum; j++)

{

if( j != source)

{

fprintf(fpOut, “%d – %d “,source, j); //write the nodes

len = strlen( ROUTE[j] );

fprintf(fpOut, “%s”, ROUTE[j] );

for(k=0; k<emptySpace – len; k++)

fprintf(fpOut,” “);

fprintf(fpOut, “%d\n”, SHORTEST_PATH[j] );

}

}

fclose( fpOut );

}

A 3 dijikstrasTest.c

#include”da_char.h”

//////////////////////////////////////

//

// the main part of the program

//

//////////////////////////////////////

int main()

{

int i,j;

int beginningV;

FILE *fpOut;

double msec;

double elapsedTimeold,resolutionOld;

__int64 starttime,endtime,totaltime,starttime1,endtime1,totaltime1;

LARGE_INTEGER freqNew,freqOld;

LARGE_INTEGER t0New, tFNew, tDiffNew,t0Old, tFOld, tDiffOld;

VertexFileBuiltStruct(“input1.txt”);

FILE *fp_vertex;

totaltime1 = 0;

fp_vertex = fopen(“input20edges.txt”, “r” );

rewind ( fp_vertex );

fscanf ( fp_vertex, “%d”, &vertexNum);

fclose(fp_vertex);

elapsedTimeold = 0.0;

for(i = 0; i<vertexNum;i++)

{

VertexFileBuiltStruct(“input20edges.txt”);

QueryPerformanceCounter(&t0Old);

QueryPerformanceFrequency(&freqOld);

FindTheRoutes(i);

QueryPerformanceCounter(&tFOld);

tDiffOld.QuadPart = tFOld.QuadPart – t0Old.QuadPart;

elapsedTimeold = elapsedTimeold + (tDiffOld.QuadPart /

(double) freqOld.QuadPart);

resolutionOld = 1.0 / (double) freqOld.QuadPart;

writeToOutput(“Output20edges.txt”, i);

}

msec = elapsedTimeold;

fpOut = fopen(“Output10edges.txt” , “a” );

rewind ( fpOut );

fprintf(fpOut, “total time take to build shortest path for all the nodes

is %lf milliseconds \n”,msec);

fclose(fpOut);

return 0;

}

B: Implementation of Dijkstras Algorithm in C When The Graph is Implemented Using Adjacency List

B1 da_adjlist.c

/*Dijkstra’s algorith on a graph represented using adjacency list*/

#define INFINITY 9999

#include <stdio.h>

#include<stdlib.h>

#include <time.h>

#ifdef WIN32

#include <Windows.h>

#else

#include <sys/time.h>

#include <ctime.h>

#endif

#define MAX 100

__int64 GetTimeMs64()

{

//#ifdef WIN32

/* Windows */

FILETIME ft;

LARGE_INTEGER li;

__int64 ret;

/* Get the amount of 100 nano seconds intervals elapsed since January 1, 1601 (UTC)

and copy it to a LARGE_INTEGER structure. */

GetSystemTimeAsFileTime(&ft);

li.LowPart = ft.dwLowDateTime;

li.HighPart = ft.dwHighDateTime;

ret = li.QuadPart;

ret -= 116444736000000000LL; /* Convert from file time to UNIX epoch time. */

ret /= 10000; /* From 100 nano seconds (10^-7) to 1 millisecond (10^-3) intervals */

return ret;

#else

/* Linux */

struct timeval tv;

gettimeofday(&tv, NULL);

uint64 ret = tv.tv_usec;

/* Convert from micro seconds (10^-6) to milliseconds (10^-3) */

ret /= 1000;

/* Adds the seconds (10^0) after converting them to milliseconds (10^-3) */

ret += (tv.tv_sec * 1000);

return ret;

#endif

}

typedef struct node

{

struct node *next;

int vertex,weight;

}node;

node *G[10];//adjacency list

int n;// Number of vertices

void readgraph();

void insert(int vi,int vj,int w);

void dijkstra( int startnode);

int i,j;

__int64 starttime,endtime,totaltime,starttime1,endtime1,totaltime1;

int main()

{

int u;

double msec;

LARGE_INTEGER freqNew,freqOld;

LARGE_INTEGER t0New, tFNew, tDiffNew,t0Old, tFOld, tDiffOld;

double elapsedTimeold,resolutionOld;

readgraph(“input20edges.txt”);

elapsedTimeold=0.0;

/*printf(“\nEnter the starting node : “);

scanf(“%d”,&u);*/

//starttime = GetTimeMs64();

for(i = 0;i<n;i++)

{

QueryPerformanceCounter(&t0Old);

QueryPerformanceFrequency(&freqOld);

dijkstra(i);

QueryPerformanceCounter(&tFOld);

tDiffOld.QuadPart = tFOld.QuadPart – t0Old.QuadPart;

elapsedTimeold = elapsedTimeold + (tDiffOld.QuadPart / (double) freqOld.QuadPart);

resolutionOld = 1.0 / (double) freqOld.QuadPart;

printf(“\n”);

}

msec = elapsedTimeold;//(double)(totaltime/1000.00);

printf(“\n total time taken by the algorithm is %lf”,msec);

getch();

}

void dijkstra( int startnode)

{

int distance[MAX],pred[MAX];

int visited[MAX],count,mindistance,nextnode,i,j;

/*pred[] stores the predecessor of each node

count gives the number of nodes seen so far*/

/* A node picked up for expansion is marked as visited[node no.]=1*/

//initialize

node *p;

for(i=0;i<n;i++)

{

distance[i]=INFINITY;

pred[i]=startnode;visited[i]=0;

}

distance[startnode]=0;

count=0;

while(count<n-1)

{

mindistance=INFINITY ;

// nextnode is the node at minimum distance

for(i=0;i<n;i++)

if(distance[i] < mindistance && !visited[i])

{

mindistance=distance[i];

nextnode=i;

}

//check if a better path exist through nextnode

visited[nextnode]=1;

for(p=G[nextnode];p!=NULL;p=p->next)

if(!visited[p->vertex])

if(mindistance+p->weight<distance[p->vertex])

{

distance[p->vertex]=mindistance+p->weight;

pred[p->vertex]=nextnode;

}

count++;

}

//print the path and distance of each node

for(i=0;i<n;i++)//Uncomment the code to check the correctness of the algorithm and //comment it to calculate the running time

if(i!=startnode)

{

printf(“\n Distance of %d = %d “,i,distance[i]);

printf(” Path = %d “,i);

j=i;

do

{

j=pred[j];

printf(“<- %d “,j);

}while(j!=startnode);

}

}

void readgraph(char *str)

{

int i,j;

int adj[100][100];

FILE *fp_vertex;

fp_vertex = fopen(str, “r” );

rewind ( fp_vertex );

fscanf ( fp_vertex, “%d”, &n);

for(i=0;i<n;i++)

{

for(j=0;j<n;j++)

{

fscanf ( fp_vertex, “%d”, &adj[i][j]);

}

}

//initialise G[] with NULL

for(i=0;i<n;i++)

G[i]=NULL;

for(i=0;i<n;i++) //create adjacency list

{

for(j=0;j<n;j++)

{

if(adj[i][j]!=0)

insert(i,j,adj[i][j]);

}

if(i==n)

break;

}

}

void insert(int vi,int vj,int w)

{

node *p,*q;

//acquire memory for the new node

q=(node *)malloc(sizeof(node));

q->vertex=vj;

q->next=NULL;

q->weight=w;

//insert the node in the linked list for the vertex no. vi

if(G[vi]==NULL)

G[vi]=q;

else

{

// go to the end of linked list

p=G[vi];

while(p->next!=NULL)

p=p->next;

p->next=q;

}

}

C: Implementation of Dijkstras Algorithm in C++ When The Graph is Implemented Using Fibonacci Heap and Adjacency List

C1 Dijkstra.h

#include <stdlib.h>

#include <fstream>

#include <string.h>

#include <conio.h>

#include “vector.h”

#include “hashmap.h”

#include “FibonaciiHeap.h”

#include <time.h>

#ifdef WIN32

#include <Windows.h>

#else

#include <sys/time.h>

#include <ctime.h>

#endif

#define KEY_MAX_LENGTH (1024)

#define KEY_PREFIX (“spffib”)

#define KEY_COUNT (1024*1024)

typedef struct data_struct_s

{

char key_string[KEY_MAX_LENGTH];

vector *edges;

int totalDistance;

int vertexCount;

} data_struct_t;

typedef struct data_key_map_index

{

int index;

data_struct_t *destinationmap;

} data_key_map_t[1024*1024];

void LoadTheShortestPath(int source,int destination);

vector *ReadTheFileAndPopulateTheGraph(char* filename);

void Dijikstra(vector *vertices1,int destination);

void SaveTheSPTUsingHashMapAndVector(vector *vertices1,int destination);

//struct *SaveTheSPTUsingHashMapAndVector(vector *vertices1,int destination,int source);

void NewDijikstra(vector *vertices1,int lastElement,char key_string[]);

vector *ReadAndPopulateTheGraphWithChangedWeight(char* filename);

void CheckAndCallDijikstraIfNeeded (vector *vertices1);

void CallNewDijikstraAndUpdatespftree(vector * vertices1,int lastElement,char *keyString);

void UpdateTheSPTTree(vector *vertices1,char *key_string,int destination);

double getOldTime();

double getNewTime();

long getVertexNumber();

__int64 GetTimeMs64();

C2 FibonaciiHeap.h

//====================================================================

//

// A network optimization algorithm using Fibonacci Heap

//

//

// ====================================================================

#ifndef _FIBONACCI_HEAP_H_

#define _FIBONACCI_HEAP_H_

#include <stdio.h>

#include <vector>

enum State

{

LABELED, UNLABELED, SCANNED

};

class Node;

class Edge

{

private:

public:

Node* tail;

Node* head;

double length;

double delta;

Edge(Node* tail, Node* head, double length);

};

class Node

{

private:

public:

Node * parent;

Node * leftSibling, * rightSibling;

Node * children;

Node * pred;

Node(int data, double key);

Node();

int data;

double key;

int rank;

std::vector<Edge*> incomingEdges;

std::vector<Edge*> outgoingEdges;

State state;

bool addChild(Node * node);

bool addSibling(Node * node);

bool remove();

Node* leftMostSibling();

Node* rightMostSibling();

void addIncomingEdge(Edge * edge);

void addOutgoingEdge(Edge * edge);

};

class FibonacciHeap

{

private:

Node* rootListByRank[100];

bool link(Node* root);

Node * minRoot;

public:

FibonacciHeap();

FibonacciHeap(Node *root);

~FibonacciHeap();

bool isEmpty();

bool insertVertex(Node * node);

void decreaseKey(double delta, Node* vertex);

Node* findMin();

Node* deleteMin();

};

#endif

C3 Dijkstra.cpp

#include <time.h>

#include “vector.h”

#include “Dijkstra.h”

#include “hashmap.h”

#include <Windows.h>

typedef unsigned long long timestamp_t;

long vertexNumber;

map_t mymap = hashmap_new();

int changenode1,changenode2;

int newUpdateCount=0;

int oldweight;

int changeWeight;

char *newFilename;

int newUpdateVertexCount = 0;

int newNodesWorkedUpon[100];

bool changeFoundinTheweightsOfRouters = false;

int FirstTimeEntryOfTheFunction = 0;

LARGE_INTEGER freqNew,freqOld;

LARGE_INTEGER t0New, tFNew, tDiffNew,t0Old, tFOld, tDiffOld;

double elapsedTimeold,elapsedTimeNew,resolutionOld,resolutionNew;

double msec;

__int64 starttime,endtime,totaltime,starttime1,endtime1,totaltime1;

/* Returns the amount of milliseconds elapsed since the UNIX epoch. Works on both

* windows and linux. */

__int64 GetTimeMs64()

{

#ifdef WIN32

/* Windows */

FILETIME ft;

LARGE_INTEGER li;

__int64 ret;

/* Get the amount of 100 nano seconds intervals elapsed since January 1, 1601 (UTC)

and copy it to a LARGE_INTEGER structure. */

GetSystemTimeAsFileTime(&ft);

li.LowPart = ft.dwLowDateTime;

li.HighPart = ft.dwHighDateTime;

ret = li.QuadPart;

ret -= 116444736000000000LL; /* Convert from file time to UNIX epoch time. */

ret /= 10000; /* From 100 nano seconds (10^-7) to 1 millisecond (10^-3) intervals */

return ret;

#else

/* Linux */

struct timeval tv;

gettimeofday(&tv, NULL);

uint64 ret = tv.tv_usec;

/* Convert from micro seconds (10^-6) to milliseconds (10^-3) */

ret /= 1000;

/* Adds the seconds (10^0) after converting them to milliseconds (10^-3) */

ret += (tv.tv_sec * 1000);

return ret;

#endif

}

static timestamp_t get_timestamp ()//for unix

{

struct timeval now;

gettimeofday (&now, NULL);

return now.tv_usec + (timestamp_t)now.tv_sec * 1000000;

}

#endif

long getVertexNumber()

{

return vertexNumber;

}

vector *ReadTheFileAndPopulateTheGraph(char* filename)

{

long n;

int edge_count = 0,indexHead = 0,indexTail = 0;

vector *vertices = vector_create(sizeof(Node),0);

vector *edges = vector_create(sizeof(Edge),0);

// Define test vertices

if(vertices->size!= 0)

vector_clear(vertices);

if(edges->size != 0)

{

vector_clear(0);

}

// Read source file

printf(“Loading %s\n”, filename);

//std::ifstream indat(“bigFile_big.dat”);

std::ifstream indat(filename);

char inp[100];

if(indat)